
Mar 21, 2026

Why Your Voice AI Flinches When Users Say "Mm-hmm" (And What Just Changed)

You're mid-conversation with a voice agent. It's explaining something. You say "mm-hmm" — the way you would with any human — and the agent stops dead. Stutters. Tries to restart. The flow is gone.

This is the most common failure mode in production voice AI and nobody talks about it. Not latency. Not hallucinations. Not the voice quality. The turn-taking.

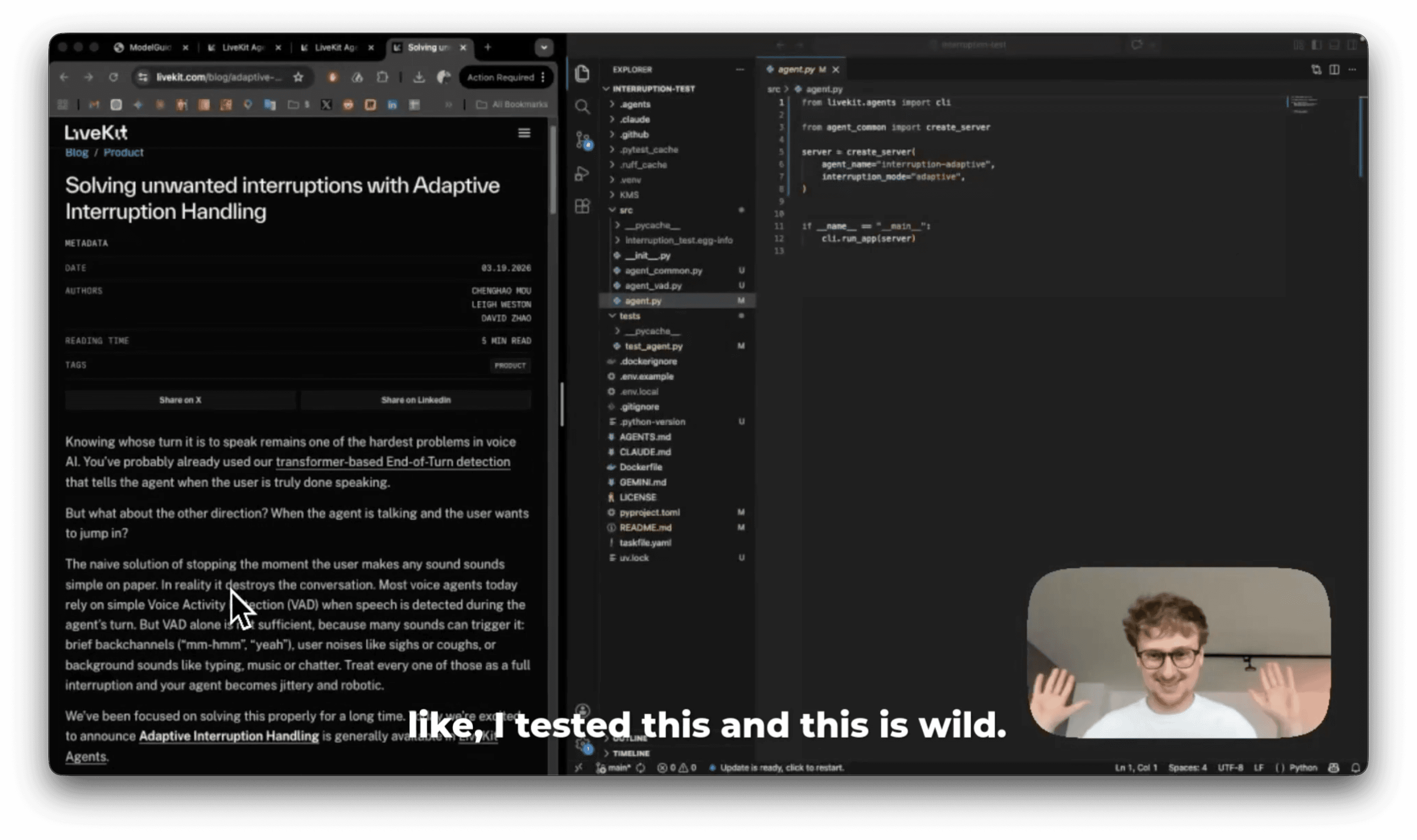

I tested a new approach from LiveKit that changes how this works. Here's what's actually happening, why it matters, and what the A/B comparison looks like in practice.

The problem: VAD treats every sound as an interruption

If you've read our previous post on voice AI architecture, you know the standard voice pipeline looks like this:

VAD (Voice Activity Detection) is the gatekeeper. It listens for sound and decides: is someone talking? If yes, it fires. The agent stops. The pipeline resets.

The problem is that VAD doesn't understand what it's hearing. It just detects sound. So all of these trigger it equally:

"mm-hmm" (backchannel — you're agreeing, not interrupting)

A cough

Keyboard typing

"yeah, yeah" (encouragement — keep going)

"wait, I have a question" (actual interruption)

A human would know the difference instantly. VAD can't. It treats a cough the same as "stop talking, I need to tell you something."
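To make the limitation concrete, here is a minimal sketch of a sound-level gate. It is a deliberately simplistic energy threshold, not what production VADs do internally, and the `threshold` value and synthetic `tone` helper are assumptions for illustration. The point it shows is that the detector only sees "loud enough or not," so two different intents look identical to it.

```python
import math

def rms(frame):
    """Root-mean-square energy of one frame of samples in [-1.0, 1.0]."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))

def energy_vad(frame, threshold=0.02):
    """Return True if the frame is loud enough to count as activity.

    The threshold is a hypothetical value. The function answers
    "is there sound?" and has no notion of what the sound means.
    """
    return rms(frame) >= threshold

def tone(freq_hz, amplitude, n=320, sample_rate=16000):
    """Synthetic 20 ms stand-in for a voiced sound (illustration only)."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
            for i in range(n)]

backchannel = tone(180, 0.1)    # stands in for "mm-hmm"
interruption = tone(220, 0.1)   # stands in for "wait, I have a question"
silence = [0.0] * 320

for name, frame in [("backchannel", backchannel),
                    ("interruption", interruption),
                    ("silence", silence)]:
    print(name, energy_vad(frame))
```

Both voiced frames fire the gate, so anything downstream that treats "VAD fired" as "user interrupted" will stop the agent for either one.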

The result: your agent becomes jittery. It stops every few seconds. The conversation feels like talking to someone who flinches at every noise. This is what most voice agents in production sound like today.

What LiveKit shipped: a smarter layer on top of VAD

On March 19, 2026, LiveKit released what they call Adaptive Interruption Handling. It's not a replacement for VAD — it's a decision layer that sits on top of it.

Here's the architecture:

The model is a CNN (convolutional neural network) combined with an audio encoder. When VAD fires during the agent's turn, instead of immediately killing the agent's speech, the adaptive model analyzes the first few hundred milliseconds of audio and looks at:

Waveform shape

How sharply the speech starts (onset strength)

Duration

Pitch and rhythm patterns

Inference takes 30ms or less, and the median amount of audio the model needs before deciding is 216ms. So the user barely notices: either the agent keeps talking (backchannel) or it stops cleanly (real interruption).
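The control flow of a decision layer like this can be sketched in a few lines. This is not LiveKit's API: `InterruptionGate`, `classify`, and `on_vad_fired` are hypothetical names, the classifier is a stub returning a probability, and the fixed 216ms window is a simplification (the source reports 216ms as a median, not a fixed buffer size).

```python
from dataclasses import dataclass
from typing import Callable, Sequence

SAMPLE_RATE = 16000  # assumed sample rate for this sketch

@dataclass
class InterruptionGate:
    # Hypothetical classifier: audio samples -> probability of a real barge-in.
    classify: Callable[[Sequence[float]], float]
    threshold: float = 0.5
    # About 216 ms of audio at 16 kHz (0.216 * 16000 = 3456 samples).
    min_samples: int = 3456

    def on_vad_fired(self, audio: Sequence[float]) -> str:
        """Decide what to do with overlapping user audio during the agent's turn."""
        if len(audio) < self.min_samples:
            return "wait"            # not enough audio yet, agent keeps talking
        if self.classify(audio) >= self.threshold:
            return "stop_agent"      # treated as a real interruption
        return "keep_talking"        # treated as a backchannel or noise

# Stub classifiers standing in for the real model.
gate_barge_in = InterruptionGate(classify=lambda audio: 0.9)
gate_backchannel = InterruptionGate(classify=lambda audio: 0.1)

audio = [0.0] * 4000
print(gate_barge_in.on_vad_fired(audio))
print(gate_backchannel.on_vad_fired(audio))
print(gate_barge_in.on_vad_fired(audio[:1000]))
```

The design point is that VAD still does the cheap first pass. The gate only runs when VAD has already fired, so the extra model adds a decision step without replacing the detector.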

The training data angle

Here's the interesting part of how they built this. They couldn't train on human-agent conversations because — as they point out — most voice agents handle interruptions so badly that the training data would be garbage. Backchannels barely exist in current voice AI interactions because users learn not to do them.

So they trained on human-to-human conversations instead. Hundreds of hours of real speech across multiple languages and topics. Then they ran the audio through an enrichment pipeline, mixing in various noise types to simulate real-world conditions.
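LiveKit hasn't published the details of its enrichment pipeline as far as I know, but the generic version of "mixing in noise" is usually done at a target signal-to-noise ratio. Here's a minimal sketch of that common technique. The function names and the synthetic signals are my own assumptions, not their code.

```python
import math
import random

def power(samples):
    """Mean squared amplitude of a signal."""
    return sum(s * s for s in samples) / len(samples)

def mix_at_snr(speech, noise, snr_db):
    """Add noise to speech so that speech power / noise power matches snr_db."""
    if len(noise) < len(speech):
        raise ValueError("noise must be at least as long as speech")
    noise = noise[:len(speech)]
    p_noise = power(noise)
    if p_noise == 0:
        return list(speech)
    # SNR(dB) = 10 * log10(P_speech / P_noise), solved for the noise power.
    target_p_noise = power(speech) / (10 ** (snr_db / 10))
    gain = math.sqrt(target_p_noise / p_noise)
    return [s + gain * n for s, n in zip(speech, noise)]

random.seed(0)
# Synthetic stand-ins: a 200 Hz tone as "speech", white noise as "background".
speech = [0.3 * math.sin(2 * math.pi * 200 * i / 16000) for i in range(1600)]
noise = [random.uniform(-1.0, 1.0) for _ in range(1600)]
noisy = mix_at_snr(speech, noise, snr_db=10)
```

Sweeping `snr_db` over a range and swapping in different noise recordings is one plausible way to turn clean conversational audio into many harder training examples.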

The result generalizes across languages the model has never seen. That's a meaningful detail — it learned conversational dynamics, not language-specific patterns.

The benchmarks

From LiveKit's evaluation on a held-out dataset:

| Metric | Result |
|---|---|
| Precision | 86% |
| Recall (at 500ms overlap) | 100% |
| False VAD barge-ins rejected | 51% |
| Faster than VAD for true barge-ins | 64% of cases |
| Inference time | ≤30ms |
| Median audio to trigger | 216ms |

That 51% number is the one that matters most for production. Of the moments where VAD alone would have stopped the agent unnecessarily, the adaptive model rejected about half. That's roughly half of those "flinch" moments eliminated.
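If the metric names are unfamiliar, here is how they map to counts. The counts below are hypothetical, chosen only so the arithmetic reproduces the reported percentages. They are not LiveKit's evaluation data, and the dataset size is an assumption.

```python
def precision(tp, fp):
    """Of everything flagged as a real barge-in, the share that truly was."""
    return tp / (tp + fp)

def recall(tp, fn):
    """Of all real barge-ins, the share that was caught."""
    return tp / (tp + fn)

# Hypothetical counts (assumption, for illustration only).
tp, fp, fn = 86, 14, 0

# Hypothetical: 100 moments where VAD fired but the user was not interrupting.
false_vad_triggers = 100
rejected_by_model = 51
remaining_flinches = false_vad_triggers - rejected_by_model

print(f"precision = {precision(tp, fp):.2f}")
print(f"recall    = {recall(tp, fn):.2f}")
print(f"flinches left per 100 false triggers = {remaining_flinches}")
```

Read this way, 100% recall means no real interruption was missed in the evaluation, while the 51% rejection rate means about half of the unnecessary stops still get through.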

The A/B test: I ran the same agent with both modes

I set up two identical agents. Same prompt (deliberately chatty — five to eight sentences per response so there's something to interrupt). Same models: Deepgram for STT, Cartesia for TTS. Same everything.

One difference: one line of config.

The repo is public: github.com/awala/livekit-adaptive-vad

VAD mode vs Adaptive mode demo

VAD mode: conversation death by backchannel

The agent starts explaining something about DNA. I say "yeah, yeah" while it's talking. It stops. Tries to restart. I say "wow" and it dies again. "Hmm." Dead again.

Adaptive mode: the agent holds its ground

Same setup. I reconnect with the adaptive agent. Same chatty prompt. I try the same backchannels.

The agent powered through muttered reactions, ignored background noise, kept its flow. But the moment I made a clear, intentional interruption — "okay, stop" — it cut cleanly and pivoted.

That's the difference. It's not subtle. It's the difference between a conversation and a broken IVR with a nice voice.

How turn-taking actually works in voice AI

To understand why this matters, you need to see the full picture of turn-taking. It's not one problem — it's two:

Problem 1: When is the user done speaking? This is end-of-turn detection. The user is talking, and the agent needs to know when to start responding. Traditional VAD uses silence as a proxy. LiveKit previously shipped a transformer-based end-of-turn model for this. Deepgram Flux fuses this detection into the STT model itself, using prosodic and semantic signals together.

Problem 2: When is the user interrupting the agent? This is interruption detection. The agent is talking, and the user makes a sound. Is it a real barge-in or a backchannel? This is what adaptive interruption handling solves.
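For Problem 1, the silence-as-proxy baseline is simple enough to sketch. This is the generic technique, not any vendor's implementation, and the function name, frame size, and timeout values are assumptions. It takes one VAD flag per audio frame and declares the turn over once enough consecutive silent frames follow speech.

```python
def end_of_turn_index(vad_flags, frame_ms=20, silence_ms=500):
    """Return the frame index where the turn is declared over, or None.

    vad_flags: one boolean per frame, True when VAD detected activity.
    """
    needed = silence_ms // frame_ms   # consecutive silent frames required
    silent_run = 0
    heard_speech = False
    for i, is_speech in enumerate(vad_flags):
        if is_speech:
            heard_speech = True
            silent_run = 0
        elif heard_speech:
            silent_run += 1
            if silent_run >= needed:
                return i
    return None

# 200 ms of speech, a 100 ms mid-sentence pause, 200 ms more speech, then silence.
flags = [True] * 10 + [False] * 5 + [True] * 10 + [False] * 30

print(end_of_turn_index(flags, silence_ms=500))  # waits through the short pause
print(end_of_turn_index(flags, silence_ms=100))  # fires during the pause
```

The trade-off is visible in the two calls: a long timeout makes the agent feel slow to respond, while a short one cuts the user off mid-thought. That tension is why end-of-turn models that look at more than silence exist.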

Most of the industry focus has been on Problem 1. Problem 2 has been largely ignored — mostly because VAD-only interruption detection "works" in the sense that it always stops when it hears something. The issue is it stops too much.

Both problems need to be solved for voice AI to feel natural. Solving only one gets you halfway — your agent either interrupts the user too early, or gets interrupted by them too easily.
